Agentic Coding Training

Program reference · EasySpecs Labs

Introduction

Train your team to leverage AI agentic coding at scale.

Joint training for POs, designers, and developers: align the team so the gains from AI-driven code generation compound into higher throughput across the codebase.

The 100x developer is a trajectory, not a dream.

The curve is steep.

The compounding is real.

We put your team on it in four days.

Barcelona · Remote or on-site · easyspecs.ai

The curve is already running. Your team may not be on it.

The engineers who started learning agentic workflows 12 months ago are now operating at levels their peers can't match.

Not because they type faster. Because they've built a factory.

Every quarter you wait, the gap compounds.

The cost isn't in the license spend. It's in the teams who got on the curve before yours.

Compounding, not overnight.

The 100x developer builds a factory.

Month over month, the factory compounds.

We don't promise 100x in four days.

We put your team on the curve that gets there.

Four days. One team. The curve.

We spend four days inside your codebase with one team.

They leave operating at the curve, not guessing at it.

Context pack

Patterns library

Review & verification norms

Replication kit

Everything versioned in your repo.

No content silos. No ongoing retainer.

Inside the workshop.

Where you are

Baseline diagnostic. Individual skill calibration.

Most teams discover the gap is wider than they thought.

The first workstations

Real tickets from your backlog.

Your team builds their first production-line workstations — refactors, tests, debugging, feature work.

The hard cases

Legacy code, ambiguous requirements, when the agent is wrong.

Your seniors redefine their role as factory architects, not typists.

The factory ships

Playbook ratified. Replication kit handed over.

Team presents working artifacts to their own leadership.

Three formats. Start where you need to.

| Compare | Catalyst | Development Team Workshop | Custom Team Immersion |
|---|---|---|---|
| Format | Half-day, leadership | 4 days, one team | 4 days + pre-engagement |
| Who | Managers, directors & executives (open attendance, leadership alignment) | Developers, senior ICs & tech leads (hands-on delivery, up to 15 participants) | Roles you need: engineering, platform, risk, etc. Team size & mix defined when we scope together |
| Best for | Pre-commitment alignment | Standard pilot team | Regulated stacks, multi-role depth |
| Investment | €1,249 | €4,995 | €16,800 |

Most teams start with the Workshop. Catalyst is for leadership alignment before commitment.

Immersion is for regulated or multi-role contexts.

All three teach the same curve — the difference is breadth and depth, not substance.

Capability stays. We don't.

During

Your team builds working agent loops on your actual backlog.

Artifacts check into your repo as we go.

At handoff

Playbook, patterns library, and replication kit live next to your code.

Your team presents results to your leadership, not us.

Two weeks later

One wrap-up call. Written recommendations on what stuck, what drifted.

No subscription, no retainer, no content silo.

Your next team runs this without us. Or you bring us back. Your call.

One call. Start the curve.

A 25-minute strategy call.

We scope your pilot team, align on success signals, and give you workshop dates.

You leave with enough to defend the plan internally.

Xesca Alabart · xesca.alabart@gluecharm.com · easyspecs.ai

A tool license won't help if the team can't use it together

Buying Cursor, Antigravity, or Copilot does not install org-wide code generation velocity. Without shared pipelines — what agents generate, from what source of truth, under what release bar — you get parallel chats and local spikes that flatline when everyone ships together. We add structure so agent output scales with your team and merge cadence, not just faster individual cycles.

One vocabulary for the whole team — one bar for quality.

Agentic coding and agentic engineering only scale when product, design, and engineering agree where truth lives, what “done” means for an agent run, and how you verify before merge. We run joint sessions so prompts, tools, and hand-offs match, with no hero prompts nobody else can run.

Individual use

Models are everywhere; a practiced, org-wide habit for agentic coding before merge usually is not.

- Defaults and “what worked for me” stay with one engineer, so teammates reverse-engineer prompts from threads and screenshots.

- Reviews slow down when every agent PR feels like a one-off black box instead of the same org-wide pattern.

- PO, design, and engineering never build a shared habit of agent-ready inputs — intent stays meeting-only while tickets stay vague.

- New hires relearn from shoulder taps because nothing repeatable lives next to the code they actually ship.

- Licenses renew org-wide while habits stay personal — merge throughput still resets every quarter.

Workflow at scale

Joint training aligns POs, designers, and developers on how agentic coding compounds at real merge volume.

- The whole team rehearses the same repo-native context, rules, and verification rituals you already demand before merge.

- Exercises mirror your backlog and cadence so practice matches reviewer load — not isolated green-field demos.

- Cross-functional sessions tie backlog, UX intent, and implementation so agentic engineering pulls one source of truth.

- Runbooks and worked examples live where engineers work — onboarding scales with headcount, not tribal broadcast.

- Vendor-neutral patterns so switching IDE or model does not reset how the org ships agent output together.

What you leave with (inside your systems)

Working patterns in your environment — not slide decks. People who have shipped production systems sit on your real tasks so agentic coding matches how you release today.

Context you can trust

- Repo rules, specs, and traces layered so every agentic coding session starts from the same facts.

- Agreement on what agents may assume and where truth lives — written down, not tribal.

- A refresh habit when reality drifts, before work ships, so context stays trustworthy.

Agentic coding across the SDLC

- Discovery, refinement, implementation, review, and release each get explicit prompts, tools, and verification.

- Traceable hand-offs so agentic engineering matches your governance — no opaque gaps between tools.

- Judgment stays human-led where it must; automation earns scope instead of pretending to replace sign-off.

Rituals, not heroics

- Lightweight rituals for reviewing agent output, tightening prompts, and retiring experiments that failed.

- Shared defaults for when to lean on automation versus when a human must sign off.

- Practices that survive Monday morning — not one-off heroics from a single power user.

Architecture that agents can read

- Boundaries and APIs clear enough for models and engineers to infer the same contracts.

- Docs and structure that scale legibility as system surface area grows.

- Safety from clarity: fewer surprises when agents touch more of the stack.

How a typical engagement runs

From discovery to light follow-up — four clear phases so your team always knows what happens next.

- Discovery & constraints

We map stack, compliance, and where agentic coding already leaks time. You pick success signals with your leads so the workshop hits real bottlenecks — not toy examples.

- Hands-on workshop days

3–4 days on your codebase: design prompts, wire MCP where it earns its keep, and run review loops together. You ship small, real improvements during the block so habits survive Monday morning.

- Ship into the repo

Artifacts land as Markdown, rules, and scripts next to the code — versioned like any other change. Your team keeps owning the system; we do not gate knowledge behind a separate subscription product.

- Light follow-up

A concise wrap-up call and written recommendations — optional deeper mentoring only if you ask for it. No bundled “content subscription”; your materials live with you.

See the workflow before you fund the workshop

Book a free 60-minute live session: we run agentic coding and agentic engineering on your repo or a reference stack — no slide deck, no product pitch, just the loop and Q&A.

Pricing and Workshops

Catalyst leadership

€1,249

Half-day – leadership cohort (unlimited participants)

For managers and leads who need a shared picture before funding a multi-day block. We connect market pressure, quality risk, and what professional agentic coding and agentic engineering require — then you leave with a clear go, park, or scale decision.

Includes

- Half-day interactive session with your leadership cohort

- Framing agentic engineering for skeptical senior staff

- Concrete before/after examples from similar stacks

- Decision checklist: when agentic coding is appropriate vs. risky

- Clear next-step options (workshop, immersion, or pause)

Recommended

Development team workshop

€4,995

3–4 days – up to 15 participants

The hands-on format most teams start with: your engineers build working agent loops, layered context, and review rituals directly on your repository — led as serious engineering work, not generic tool tips.

Includes

- 3–4 calendar days on your codebase and toolchain

- Curriculum below: context engineering, SDLC agents, team practices, architecture for AI

- Product and design join for context exercises where it helps; engineering owns implementation depth

- Artifacts checked in: prompts, rules, MCP wiring, and a starter playbook

- Human-led verification on merge-worthy work — one professional bar for the whole team

Maximum depth

Custom team immersion

€16,800

3–4 days

When your risks and workflows need more than a standard run: we shape the block to your company, then combine shared whole-team time with private sessions per role so each function gets a dedicated deep dive — not only a single mixed track. The development team workshop stays deliberately coding- and implementation-led; the immersion adds that common-plus-individual structure so depth is higher across roles. Still 3–4 days with everything versioned in your repo.

Includes

- Curriculum and exercises scoped to your priorities, stakeholders, and product risks

- Shared sessions for cross-role alignment plus private breakouts per role for role-specific depth

- Maximum live work on your repository with merge-worthy artifacts as we go

- On-site or remote delivery

- Context packs, prompt libraries, and review rituals you keep

- Written playbook + implementation notes checked into your repo; additional focused days at €4,200 each if you expand scope later

Development team workshop curriculum

What your team achieves

Led by practitioners with 20+ years of architecture and engineering experience — not AI tool trainers. Hands-on with your actual codebase and product challenges. Your team builds working agents, AI-powered workflows, and practices they deploy immediately.

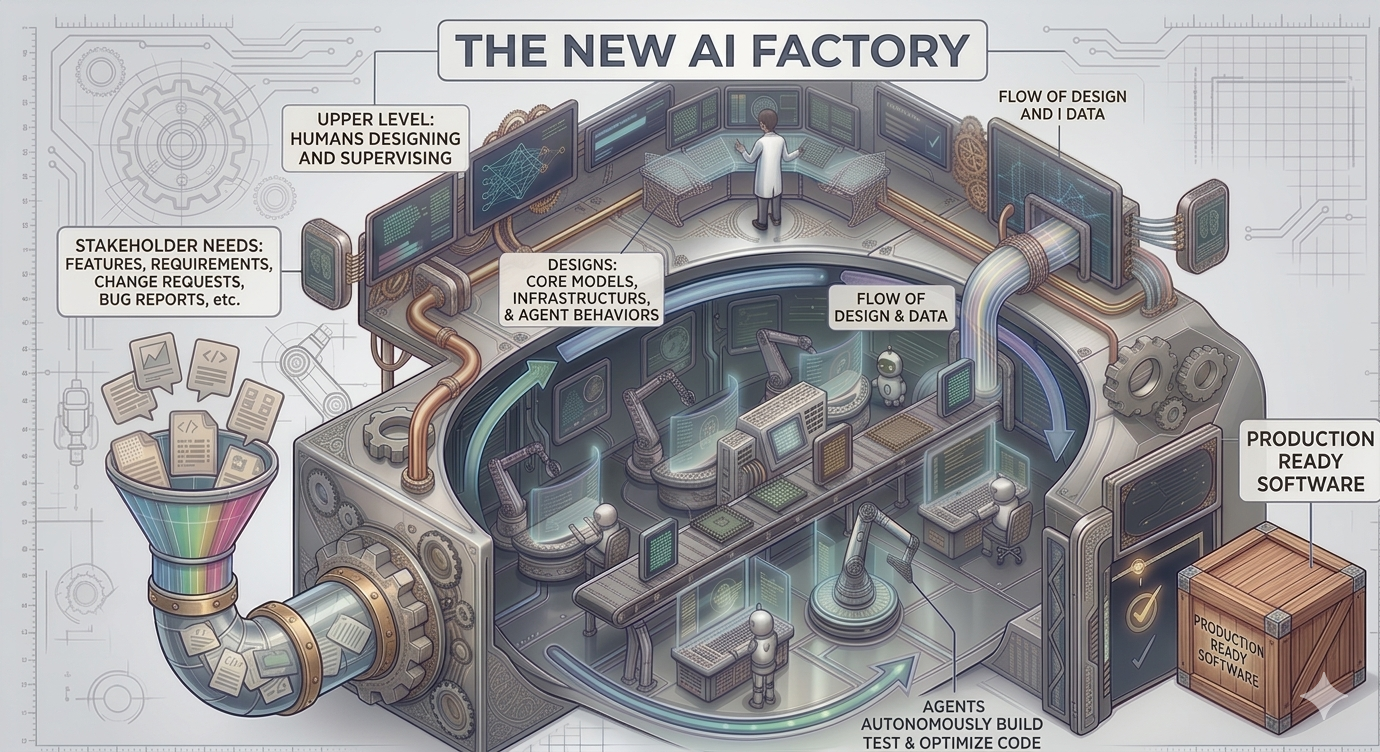

AI factory pattern: production line and workstation

We frame serious agentic coding with industrial metaphors you can teach and repeat. Together they describe a non-interactive way of working: you spend your time hardening how software is produced, not hand-authoring every variant of the product.

AI factory design pattern

In AI-assisted development, the factory is what you version next to the code: rules, MCP tools, sub-agents, policies, and verification hooks that manufacture artifacts—patches, tests, migrations, docs—from structured intent. You implement and review the factory; the factory implements the feature-shaped output.

Production line

The production line is the ordered pipeline through your workstations: each step declares inputs and outputs (for example story → technical plan → patch → tests → human gate). Because the sequence is explicit, you can re-run the same line when priorities change and still land production-ready diffs.
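A minimal sketch of that idea in Python, assuming each step can be modeled as a function from one text artifact to the next. The `Step` type, the `validate_line` and `run_line` helpers, and the placeholder lambdas are all hypothetical names for illustration; in a real line each `run` would call an agent or a tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    """One step on the line, declaring what it consumes and what it emits."""
    name: str
    consumes: str
    produces: str
    run: Callable[[str], str]


def validate_line(steps: list[Step]) -> None:
    """Fail fast if one step's output is not the next step's input."""
    for prev, nxt in zip(steps, steps[1:]):
        if prev.produces != nxt.consumes:
            raise ValueError(
                f"{prev.name} produces {prev.produces!r} "
                f"but {nxt.name} consumes {nxt.consumes!r}"
            )


def run_line(steps: list[Step], payload: str) -> list[tuple[str, str]]:
    """Run every step in order; return (artifact kind, artifact) pairs as a trace."""
    validate_line(steps)
    trace = []
    for step in steps:
        payload = step.run(payload)
        trace.append((step.produces, payload))
    return trace


# Placeholder steps (assumption): each lambda stands in for an agent or tool call.
LINE = [
    Step("plan", "story", "technical plan", lambda s: f"plan for: {s}"),
    Step("implement", "technical plan", "patch", lambda p: f"patch from: {p}"),
    Step("test", "patch", "tests", lambda p: f"tests for: {p}"),
    Step("gate", "tests", "human gate", lambda t: f"awaiting sign-off on: {t}"),
]

trace = run_line(LINE, "export invoices as CSV")
for kind, artifact in trace:
    print(f"{kind}: {artifact}")
```

Because the sequence is data rather than a conversation, re-running the line after a priority change means calling `run_line` again with a new story; the declared inputs and outputs catch a mis-ordered step before any agent runs.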

Workstation

A workstation is one narrow station on the line: a scoped agent run with its own prompt pack, tools, and acceptance checks—generate contract tests for this module, refactor under these invariants, summarize this delta for reviewers. Small blast radius, inspectable results.

Non-interactive delivery and changing scope

Instead of “chat until it looks right,” teams invest in factories and lines that run with minimal babysitting—closer to a build than a conversation. When feature scope shifts, you do not chase the old hand-written path: you adjust factory inputs or a workstation, then repeat the process so the line emits a new production-ready solution. That is how delivery speed and flexibility compound as requirements move.

Context engineering foundations

Your infrastructure makes any AI tool work reliably. Your multi-layer context architecture becomes your foundation. Your product teams structure domain knowledge as AI context. Your developers configure tools, MCPs, and sub-agents for consistent results. Your configurations are documented, customized, and ready to deploy immediately.

Agents for every development phase

Your agents work through every development phase. Your POs produce complete specs from user stories. Your planning catches dependencies early. Your implementation follows standards consistently. Your reviews identify improvements systematically. Your legacy migrations gain momentum. Every phase becomes coordinated.

Team practices & change management

Your entire team transforms how they work together across development and product roles. Your practices refine sprint by sprint. Your team recognizes when to adapt agents and discovers new patterns. Your custom playbook and 90-day checklist guide your next steps.

Architecture & quality at scale

Your patterns work brilliantly with AI. Your complex systems simplify into clear boundaries agents understand. Your APIs eliminate ambiguity. Your documentation feeds context naturally. Your architecture becomes the foundation that makes AI reliable, not a constraint.

Agentic Coding Coaching

Workshops are coached by senior EasySpecs practitioners — product, structured requirements, and platform architecture at the table with your team, not junior facilitators reading slides.

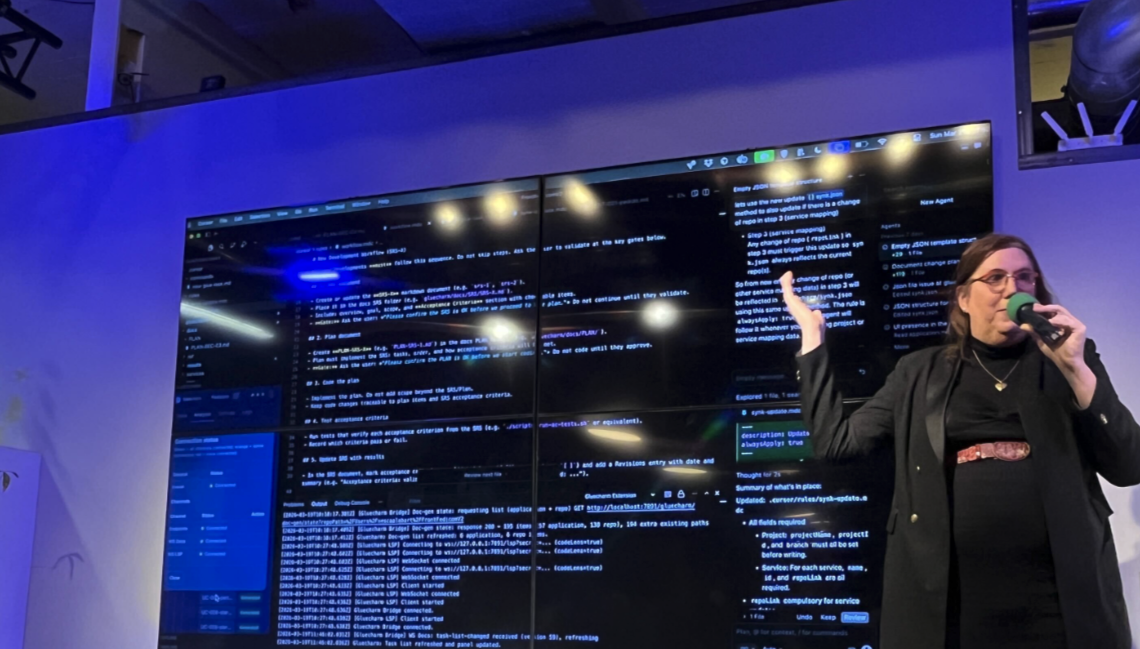

Xesca Alabart

Lead coach — product & requirements · CEO, EasySpecs

Xesca combines product leadership with requirements engineering, helping teams turn business intent into explicit objectives and acceptance signals agents can work against—not vague chat prompts. She facilitates mixed rooms of engineering, product, and design while keeping a sharp definition of what “done” means.

- Aligns engineering, product, and design without diluting technical depth

- Brings structured requirements practice into AI-assisted delivery

- Based in Barcelona; delivers in English, Spanish, or Catalan as needed

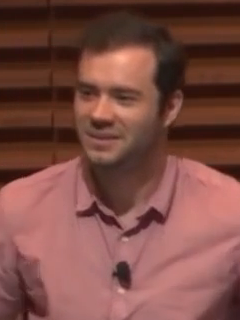

Carlos Guirao Capistany

Lead coach — platform & agent workflows · CTO, EasySpecs

Carlos architects EasySpecs’ platform and AI systems with an emphasis on safe, reviewable change in mature codebases. He focuses on boundaries, APIs, and verification so agentic engineering produces diffs your team can trust—not opaque churn.

- Systems and integration architecture for complex products

- Hands-on with agent workflows, tools, and how they land in your repository

- Leads the technical spine behind Application Mapping and agent-ready context

Reference · SDD

Specification-Driven Development (SDD)

SDD usually means Specification-Driven Development. Some people expand it as “design-driven,” but the specification reading is the one most teams use.

SDD is a methodology where the specification is the primary artifact of the software process — not the code. Code is derived, regenerated, or validated against a rigorously maintained spec. That is the thesis behind Gluecharm and easyspecs.ai: structured intent stays in charge while agents handle implementation volume.

- Intent leads

- Code follows structure

- Agents absorb volume

Core idea

Traditional development

- Requirements flow into code through a lossy translation.

- Code becomes the source of truth.

- Specs rot, drift, or never existed in an executable or machine-readable form.

Specification-Driven Development

- The specification is the source of truth — structured, versioned, machine-readable.

- Code is derived, regenerated, or checked against the spec.

- Changes start at the spec layer first, then propagate to implementation.

Executable shape

The spec is expected to be precise enough to run or verify — not a vague Word document, but a structured artifact (often a graph, DSL, or typed schema) covering features, scenarios, data models, services, and constraints.
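As a rough illustration of the typed-schema option, the sketch below models a tiny spec with Python dataclasses and a lint pass that a machine can run. The `Scenario`, `Feature`, and `Spec` types and the two lint rules are assumptions chosen for the example; they are not the EasySpecs format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    id: str
    given: str
    when: str
    then: str


@dataclass(frozen=True)
class Feature:
    id: str
    title: str
    entities: tuple[str, ...]        # data entities the feature touches
    scenarios: tuple[Scenario, ...]


@dataclass(frozen=True)
class Spec:
    entities: frozenset[str]         # every data entity the spec declares
    features: tuple[Feature, ...]


def lint(spec: Spec) -> list[str]:
    """Return structural problems that a prose document would hide."""
    problems = []
    for feature in spec.features:
        for entity in feature.entities:
            if entity not in spec.entities:
                problems.append(f"{feature.id}: unknown entity {entity!r}")
        if not feature.scenarios:
            problems.append(f"{feature.id}: no scenarios")
    return problems


spec = Spec(
    entities=frozenset({"Invoice"}),
    features=(
        Feature(
            "F-1",
            "Export invoices as CSV",
            ("Invoice", "Customer"),
            (Scenario("S-1", "a customer with two invoices",
                      "they request an export", "the file has two rows"),),
        ),
        Feature("F-2", "Archive old invoices", ("Invoice",), ()),
    ),
)

for problem in lint(spec):
    print(problem)
```

The point of the structure is that gaps such as an undeclared entity or a feature without scenarios surface as check failures before any code is generated.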

Why it is resurging now

Before LLMs, SDD lived mostly in formal-methods circles (TLA+, Alloy, B-Method, Event-B): rigorous but high-friction, so it stayed in aerospace, finance, and protocol design. With agentic coding, the economics flipped. Implementation is increasingly cheap — models and IDEs can generate thousands of lines on demand — so the bottleneck moved upstream to “what exactly do we want built?” A fuzzy prompt produces fuzzy code at massive scale. A precise specification produces consistent, regeneratable code. That is the Agentic Conf Hamburg thesis: the missing layer between intent and merges.

Typical components of an SDD workflow

- Structured spec artifacts — features, use cases, scenarios, views, services, data entities, and non-functional requirements (for example a five-scope impact analysis).

- Change requests as first-class objects — spec edits are tracked, reviewed, and impact-analyzed (including CR “animal sizing” from mouse-sized to dinosaur-sized change).

- Bidirectional traceability — lines of code map to requirements; requirements map to tests and implementation.

- Impact analysis — given a change request, know which features, services, entities, and views move before anyone edits code.

- Generation and synchronization — agents produce or update code from the spec; discovery flows can rebuild or align the spec from existing code (for example OpenCode discovery).
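Traceability and impact analysis from the list above can be sketched as a walk over a dependency graph. This is a simplified illustration, assuming trace links are stored as a plain mapping from each node to the nodes that depend on it; the node names and the `impact` and `reverse` helpers are hypothetical.

```python
from collections import deque

# Hypothetical trace links: each node maps to the nodes that depend on it.
DEPENDENTS = {
    "entity:Invoice": ["service:billing", "view:invoice-list"],
    "service:billing": ["feature:export-csv", "test:test_billing.py"],
    "view:invoice-list": ["feature:export-csv"],
    "feature:export-csv": [],
    "test:test_billing.py": [],
}


def impact(changed: str, dependents: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk: everything reachable from the changed node."""
    seen = {changed}
    queue = deque([changed])
    affected = []
    while queue:
        node = queue.popleft()
        for nxt in dependents.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                affected.append(nxt)
                queue.append(nxt)
    return affected


def reverse(dependents: dict[str, list[str]]) -> dict[str, list[str]]:
    """Flip the links so each node lists what it depends on (the other direction)."""
    back = {node: [] for node in dependents}
    for source, targets in dependents.items():
        for target in targets:
            back.setdefault(target, []).append(source)
    return back


affected = impact("entity:Invoice", DEPENDENTS)
depends_on = reverse(DEPENDENTS)
print(affected)
print(depends_on["feature:export-csv"])
```

Running `impact` on a change request's target gives the list of services, views, features, and tests to review before anyone edits code; `reverse` answers the opposite question of what a given feature rests on.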

How SDD differs from neighbors

Same industry, different source of truth and precision bar.

| Approach | Source of truth | Precision |
|---|---|---|
| Vibe coding | Chat history | None |
| TDD | Tests | Behavioral only |
| BDD | Gherkin scenarios | Behavioral, human-readable |
| DDD | Domain model | Conceptual |
| Model-Driven Engineering | UML / metamodels | High but heavy |
| SDD | Structured specification | High, executable-adjacent |
| Formal methods | Mathematical spec | Provable |

Reference · Field notes

Why we cite Andrej Karpathy

- Interface → habit

- Oversight returns

- Durable surfaces

His public arc tracks how the field matured: natural language as the interface, then “vibe coding” for throwaways, then professional agentic engineering with oversight — and lately the “compile, don’t just retrieve” pattern behind durable specs. Below are attributed lines from that thread (plus one community synthesis of his LLM Wiki framing). The practice is what we install with your team:

“The hottest new programming language is English.”

Jan 2023 — English as the interface

Andrej Karpathy, X (Jan 24, 2023)

“There's a new kind of coding I call 'vibe coding', where you fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

Feb 2025 — vibe coding

Andrej Karpathy, X (Feb 2, 2025)

“Suddenly, everyone is a programmer because everyone speaks natural language like English. This is extremely bullish and very interesting to me and also completely unprecedented.”

June 2025 — YC AI Startup School

Andrej Karpathy, quoted in coverage of his YC AI Startup School keynote (e.g. mlnotes.substack.com)

“Today (1 year later), programming via LLM agents is increasingly becoming a default workflow for professionals, except with more oversight and scrutiny. The goal is to claim the leverage from the use of agents but without any compromise on the quality of the software.”

Feb 2026 — agentic engineering

Andrej Karpathy, one-year retrospective on X (Feb 2, 2026)

“Many people have tried to come up with a better name for this to differentiate it from vibe coding, personally my current favorite 'agentic engineering': 'agentic' because the new default is that you are not writing the code directly 99% of the time, you are orchestrating agents who do and acting as oversight. 'engineering' to emphasize that there is an art & science and expertise to it.”

Feb 2026 — why the new name

Andrej Karpathy, same retrospective on X (Feb 2, 2026)

“The correct way to use LLMs is not Q&A, it's compilation.”

LLM Wiki pattern — compilation

Summarized baseline of Karpathy’s LLM Wiki gist (github.com/karpathy/442a6bf555914893e9891c11519de94f); phrasing from community writeups of the pattern

“Ideas outrank Code — Your wiki of decisions and formulas is worth more than the code it generates.”

Positioning note (community synthesis)

Community principle derived from Karpathy’s LLM Wiki paradigm (github.com/Ss1024sS/LLM-wiki); swap “wiki” for “SRS” and you have the EasySpecs thesis

Training turns that arc into repo habits: orchestration with human oversight, quality gates that do not move, and versioned specs the team treats as the compiled surface agents read — not disposable chat.

Dive Deeper

Learn more about Specification-Driven Development (SDD)

Plain-language primer: what SDD names, how the loop runs, why agentic coding brought it back, how PRDs and SRS-style docs fit as inputs—then contrasts, workflow depth, and Karpathy context.

- Contrasts & neighbors

- Karpathy in context

- One calm read

Questions we hear often

Scope, logistics, and how we plug into your week.

- What is the difference between Catalyst, the development team workshop, and the custom immersion?

- Catalyst leadership (€1,249) is a half-day alignment session for managers and leads before you fund a multi-day block. The development team workshop (€4,995) is our recommended 3–4 day hands-on build centered on engineering and agentic coding depth, with product and design joining for context where it helps. Custom team immersion (€16,800) is also 3–4 days but shaped to your company: shared whole-team time plus private breakouts per role for deeper, role-specific dives — higher overall depth than one mixed implementation track. Workshop and immersion both ship artifacts into your repository.

- Do product people participate, or is this developers only?

- The immersion is built for mixed rooms. Developers focus on agentic coding loops with reviewable diffs; POs and designers focus on upstream context (stories, acceptance signals, prototypes) so agentic engineering does not stop at the IDE. No coding background is required for product roles — clarity is the prerequisite.

- Will this be obsolete next month when models change?

- Models will change; verifiable context, explicit objectives, and disciplined review will not. We teach durable habits behind agentic coding and agentic engineering so your team can swap tools without restarting from zero.

- Remote or distributed teams?

- Yes. Sessions run over video, artifacts land in your repo, and documentation travels with the team. Distributed teams often benefit more because agentic engineering forces implicit knowledge into written context.

- Languages and frameworks?

- Principles are universal; exercises follow your stack: Java, Python, JavaScript, Go, C#, TypeScript, React, Spring, Django, .NET, and more. Agentic coding patterns map to your build, test, and review toolchain.

- Minimum team size?

- Useful from ~3 people up to large orgs. Small groups get intensity; 8–15 balances peer learning; bigger roll-outs usually sequence champions after the first immersion.

- Pilot one team first?

- Typical. Prove agentic engineering with one crew, document the playbook, then replicate with additional modules or a second cohort.

- What happens after the last workshop day?

- You keep every artifact in your repository — prompts, rules, checklists, notes. We include a focused wrap-up conversation and written recommendations. If you want ongoing coaching, we scope that separately; there is no bundled content subscription.

- We already pay for Copilot / Cursor — why training?

- A seat gives access; it does not give shared practice. Agentic coding still needs context design, verification, and ownership. Agentic engineering is how those habits scale across roles. Training aligns the team on the same professional standard instead of ten private workflows.

Still unsure? Email us and we will answer directly.

Agentic team training

Kill the “we’ll decide later” loop in one short call.

Book a strategy call to align scope, tier, and dates — so you can defend the plan internally without another week of email.

- Tier and rollout clarity in the first conversation — not a week of email.

- Workbooks and examples ship beside your code — no extra content silo.

- Quarterly cohort capacity is real — Q3 2026 dates move first.

Next step

Strategy call

~25 minutes on video. Your stack, pilot vs full cohort, and what “done” looks like.

Multi-day blocks are capped each quarter — Q3 2026 dates move first.